Local ARC Challenge

As a long-time fan of the Machine Learning Street Talk podcast, I listened to many researchers discussing the original ARC Prize as well as its more challenging successor, ARC 2. However, I had never looked at any of the puzzles, let alone tried to design solutions for them. I came across an interesting open-source implementation that claimed to score almost 98% on the ARC 2 public evaluation set. Surprisingly, the repo had received very little fanfare, and I could not find much discussion of the validity of the claims, which were made by Confluence Labs, a YC-backed startup.

Like many approaches to solving ARC-style puzzles (at least according to the understanding I gained during my weekly ritual of mowing while listening to ML Street Talk), this approach relied on program synthesis. The main contribution of this repository seems to be the agentic harness built around existing foundation models. Specifically, the authors frame each problem so that the model can form testable hypotheses and iteratively improve its understanding within the harness, which helps it cope with the data sparsity inherent to the benchmark. Using Gemini, the authors claim a 98% success rate with their state-of-the-art solver at an impressive cost of $12 per task.

I was interested not in validating the claims but rather in determining what success rate I could achieve at the even more impressive cost of free. That is, could I borrow the novel ideas behind their harness to create a self-reflecting solver that ran exclusively on my local desktop machine? The first requirement, obviously, was to downgrade from Gemini to an open-weight model that I could run on a single consumer GPU. The second was to replace the cloud sandboxes on e2b, which were used to safely run the LLM-generated code, with a series of containers running on my machine.

Qwen 3.5 Models

The timing of this repository popping up in my feed fortuitously aligned with the announcement of the Qwen 3.5 models. At least, I thought the coincidence was fortuitous at the time, but more on that later. The Qwen 3.5 series showed very impressive benchmark performance, making these models obvious candidates for solving reasoning-heavy puzzles. Thanks to the wonderful Unsloth, quantizations were available on Hugging Face with compression aggressive enough to fit on my consumer graphics card. I explored the 35B-A3B model, a mixture-of-experts (MoE) model with 3 billion active parameters, at 3-bit quantization.

I learned that working with the very cutting edge of open-weight models is surprisingly challenging. When the quantization first became available, neither SGLang nor vLLM supported the Qwen 3.5 architecture in any release. While there was documentation for installing each framework from source using the latest main branch, I struggled to get either one installed with package versions compatible with the Qwen 3.5 models. I was soon upgrading many packages from source (e.g., transformers) to support the latest and greatest features, but I kept stumbling into version incompatibilities. Ultimately, I pivoted to llama.cpp for model serving and was able to get an inference web server online with relatively little effort. After a few tweaks to the context length, I found a configuration that avoided both CUDA out-of-memory (OOM) errors and context overflow errors from the harness.
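For readers who want to reproduce this kind of setup, here is a minimal sketch of how a harness might query a local llama.cpp server. It assumes `llama-server` was launched with something like `llama-server -m <model>.gguf -c <context_length> --port 8080` and relies on the server's OpenAI-compatible `/v1/chat/completions` endpoint. The port, sampling values, and helper names (`build_chat_request`, `chat`) are illustrative assumptions, not the code in my fork.

```python
import json
import urllib.request

# Hypothetical address; assumes llama-server was started with --port 8080.
BASE_URL = "http://localhost:8080"


def build_chat_request(messages, max_tokens=2048, temperature=0.6):
    """Build a POST request for llama-server's OpenAI-compatible chat endpoint."""
    payload = {
        "messages": messages,
        "max_tokens": max_tokens,
        "temperature": temperature,  # illustrative value, not a tuned setting
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(messages, timeout=600, **kwargs):
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(messages, **kwargs)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Usage would look like `chat([{"role": "user", "content": "..."}])`. The generous timeout is deliberate: a single consumer GPU can take minutes on long agent prompts.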

A few days after my initial model exploration, the Qwen 3.5 Small Model Series was announced, which opened up some new possibilities. If I were willing to use quantization again, I could run multiple instances of a model in parallel to increase my throughput. Even with the largest 9B model, for example, I could fit two sets of quantized weights into my 24 GB of VRAM while still allowing for reasonable context limits. There is an interesting trade-off here: the smaller models perform somewhat worse, but they would let the harness write programs and generate, test, and revise hypotheses more quickly. While I was able to get this model online and processing parallel requests, I ran into a silent issue. The sliding window attention used in the small models was not yet supported by llama.cpp; the available releases lacked proper KV cache support for this style of hybrid attention, which prevented any KV caching. The time spent reprocessing the entire token sequence during long requests dwarfed any throughput gains from running the model in parallel.
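The cost of missing KV cache support is easy to underestimate. A rough back-of-the-envelope model compares how many prompt tokens must be processed with and without prefix caching over a multi-turn agent session. It assumes each turn appends a fixed number of tokens and ignores generation time; the function name and numbers are purely illustrative.

```python
def prefill_tokens(turns: int, tokens_per_turn: int, cached: bool) -> int:
    """Total prompt tokens processed over a multi-turn agent session.

    Simplified model (an assumption): every turn appends `tokens_per_turn`
    tokens. With a KV cache, only the new tokens are processed; without one,
    the entire conversation so far is re-processed on every request.
    """
    total = 0
    context_length = 0
    for _ in range(turns):
        context_length += tokens_per_turn
        total += tokens_per_turn if cached else context_length
    return total


with_cache = prefill_tokens(turns=20, tokens_per_turn=2_000, cached=True)
without_cache = prefill_tokens(turns=20, tokens_per_turn=2_000, cached=False)
print(with_cache, without_cache, without_cache / with_cache)
# 40000 420000 10.5
```

Without caching, the work grows quadratically with the number of turns (the ratio is (n + 1) / 2 for n turns), so doubling throughput with a second model instance cannot keep up once sessions get long.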

Initial Implementation

For these reasons, I ended up largely relying on the original approach, with the Qwen3.5-35B-A3B model running in llama.cpp without parallel requests. I forked the original repository and modified my fork to support local model inference as well as code execution in local Docker containers. My implementation also supported parallel sandbox code execution, although this was much less beneficial since all of the code was produced by a single model instance handling one request at a time. Feel free to explore my fork if you are interested in running the harness with local models. By the time I get around to publishing this article, there will likely be full support in the model serving framework of your choice, which should greatly simplify the setup process.
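For the sandbox side, here is a minimal sketch of running generated code in a throwaway Docker container from Python, with a thread pool for parallel execution. It is not the code from my fork: the image name `arc-sandbox:latest` is hypothetical (any image with Python and NumPy would do), and the resource limits are placeholder values.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

# Hypothetical image name; it needs python3 and numpy installed.
SANDBOX_IMAGE = "arc-sandbox:latest"


def docker_command(workdir, script="transform.py", image=SANDBOX_IMAGE,
                   memory="2g", cpus="1"):
    """Build a `docker run` command that executes `script` inside `workdir`."""
    return [
        "docker", "run", "--rm",      # remove the container when it exits
        "--network", "none",          # generated code gets no network access
        "--memory", memory,           # cap memory usage
        "--cpus", cpus,               # cap CPU usage
        "-v", f"{Path(workdir).resolve()}:/work",  # mount the task directory
        "-w", "/work",                # run from the mounted directory
        image,
        "python3", script,
    ]


def run_in_sandbox(workdir, timeout=60):
    """Run one sandboxed script and return (exit code, stdout, stderr)."""
    try:
        result = subprocess.run(docker_command(workdir), capture_output=True,
                                text=True, timeout=timeout)
        return result.returncode, result.stdout, result.stderr
    except subprocess.TimeoutExpired:
        # This kills the docker CLI process, which may leave the container
        # running; a stricter version would name it and `docker kill` it.
        return -1, "", f"timed out after {timeout}s"


def run_many(workdirs, max_workers=4):
    """Execute several sandboxes concurrently, preserving input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_in_sandbox, workdirs))
```

Threads are sufficient here because each worker just waits on an external `docker` process, so the GIL is not a bottleneck.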

Evaluation

Due to my hardware limitations, I focused on solving a single problem from the ARC 2 benchmark set. Spoiler: none of my handful of attempts solved it. However, a few agents produced interesting traces, including one that produced a solution demonstrating a strong understanding of the problem and its geometry. I believe that carefully examining the problem before walking through the model's chain of reasoning illustrates the power of the approach championed by Confluence Labs and helps build intuition for similar strategies that compensate for historically data-inefficient learning.

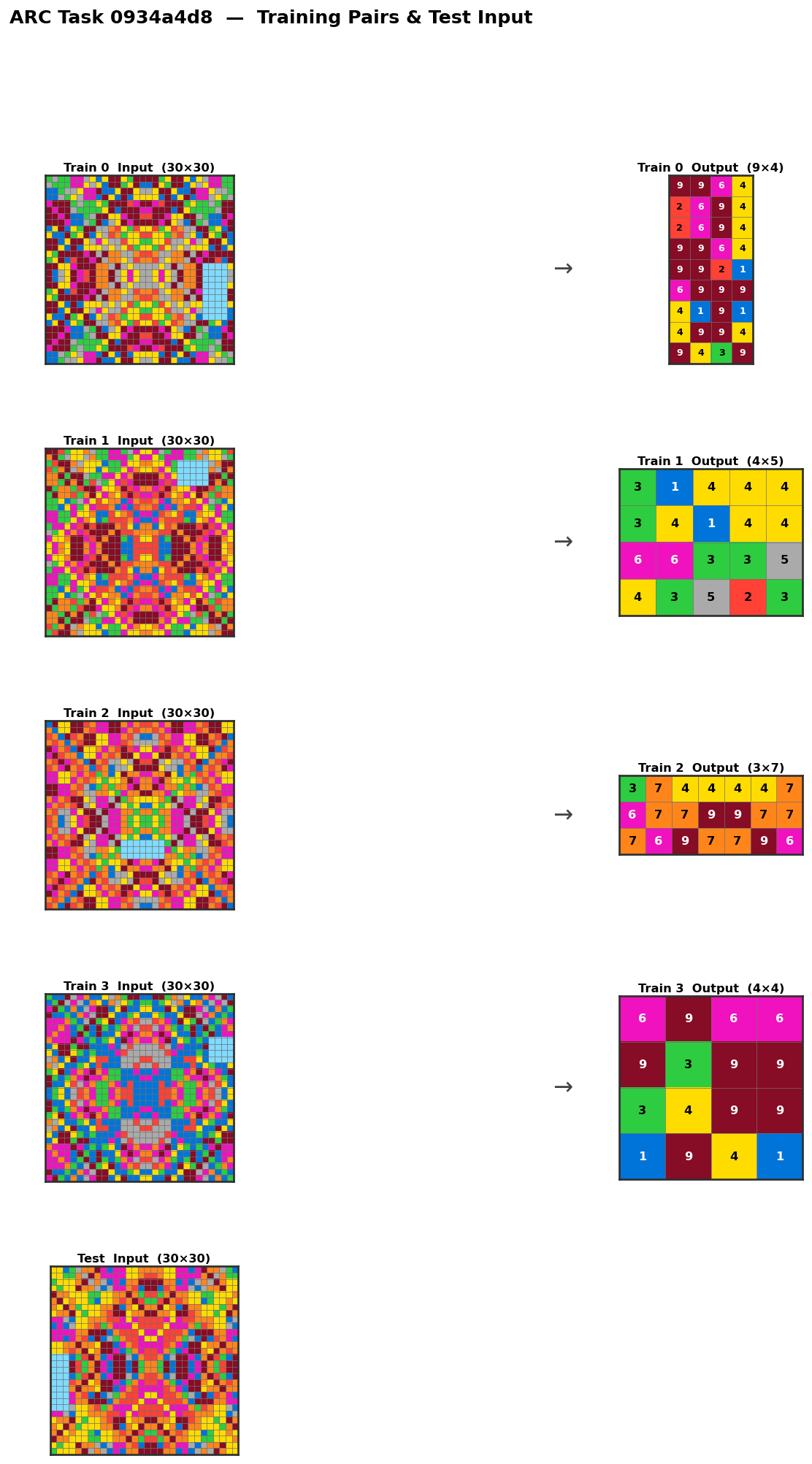

Training Input

After I found this interesting trace, I created a utility to visualize the ARC puzzles, the solutions created by the solver, and some of the hypothesis testing performed by the harness. To begin, let’s look at the task associated with this particular puzzle (task 0934a4d8). All of the inputs are 30x30 grids with a rectangular region masked out using the digit 8. Solving the task requires imputing the correct pattern into this region. Data sparsity is clearly at play, since there are only four input-output pairs from which the correct pattern must be extracted. Before we look at any solutions, examine the inputs below and try to identify the pattern.
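ARC tasks are distributed as JSON files with `train` and `test` lists of `input`/`output` grid pairs. As a sketch (the helper names are my own, not taken from the harness), the following loads such a task and checks the property implied above: each training output has exactly the shape of the 8s bounding box in its input.

```python
import json

import numpy as np


def load_task(path):
    """Load an ARC task: {"train": [...], "test": [...]} of input/output grids."""
    with open(path) as f:
        return json.load(f)


def mask_bbox(grid, mask_value=8):
    """Return (row_start, row_end, col_start, col_end) of the masked cells."""
    rows, cols = np.where(grid == mask_value)
    return int(rows.min()), int(rows.max()), int(cols.min()), int(cols.max())


def mask_matches_output(task):
    """Check that every training output has the shape of its input's mask."""
    for pair in task["train"]:
        grid = np.array(pair["input"])
        output = np.array(pair["output"])
        r0, r1, c0, c1 = mask_bbox(grid)
        if output.shape != (r1 - r0 + 1, c1 - c0 + 1):
            return False
    return True
```

With a local copy of the task (the filename is illustrative), `mask_matches_output(load_task("0934a4d8.json"))` would confirm that the answer is simply the content hidden under the 8s.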

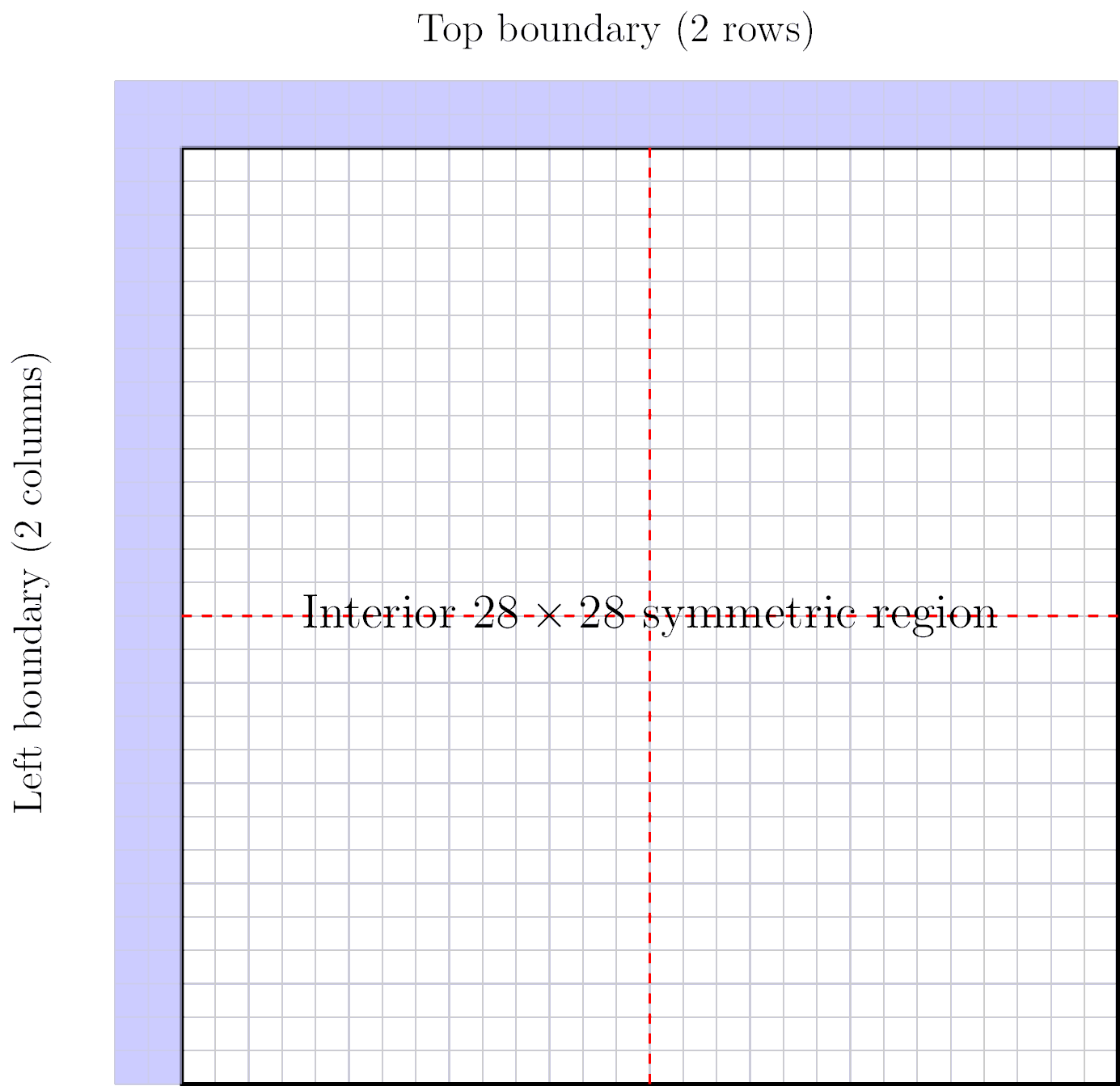

In case you struggled to see the pattern, the figure below illustrates the general symmetries present in this problem. There is a region with both horizontal and vertical symmetry that is offset from the center of the full grid. With this understanding, determining the correct numbers within the symmetric region becomes trivial, while determining the correct numbers outside this region requires a little more thought. Critically, note that all four training inputs only require imputing numbers in the symmetric region, while the test input requires filling in numbers outside this region.
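To make the trivial part concrete, here is a sketch of imputing masked cells from their mirror partners. It assumes a hypothetical symmetry constant `s`: row r mirrors row s − r and column c mirrors column s − c (the value 31 below is only an example of a symmetric block offset from the center of a 30x30 grid). Cells whose mirror partners fall outside the grid are left untouched, which is exactly the part of the problem this trick cannot solve.

```python
import numpy as np


def impute_by_mirror(grid, s, mask_value=8):
    """Fill masked cells from horizontal/vertical mirror partners.

    Assumption: inside the symmetric block, cell (r, c) equals (r, s - c),
    (s - r, c), and (s - r, s - c). A cell is only treated as part of that
    block if both its mirrored row and mirrored column are inside the grid.
    Returns the filled grid and the number of masked cells left unfilled.
    """
    filled = grid.copy()
    height, width = grid.shape
    unfilled = 0
    for r, c in zip(*np.where(grid == mask_value)):
        mr, mc = s - r, s - c
        if not (0 <= mr < height and 0 <= mc < width):
            unfilled += 1  # outside the symmetric block: no partner to copy
            continue
        for pr, pc in ((r, mc), (mr, c), (mr, mc)):
            if grid[pr, pc] != mask_value:
                filled[r, c] = grid[pr, pc]
                break
        else:
            unfilled += 1  # every partner is masked too
    return filled, unfilled


# Synthetic example: mirror-symmetric for rows/cols 2..29 when s = 31.
idx = np.arange(30)
fold = np.minimum(idx, 31 - idx)
truth = (5 * fold[:, None] + 3 * fold[None, :]) % 7  # values 0-6, never 8
masked = truth.copy()
masked[10:19, 0:3] = 8  # hypothetical 9x3 mask touching the left edge
filled, unfilled = impute_by_mirror(masked, s=31)
print(unfilled)  # 18: columns 0 and 1 have no mirror partner inside the grid
```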

Initial Attempt

Now that we understand the problem, let’s examine how the model approached it. The model's first attempt was this snippet:

```python
import json

import numpy as np


def find_8s_region(grid):
    """Find the bounding box of 8s in the grid."""
    rows, cols = np.where(grid == 8)
    if len(rows) == 0:
        return None
    return {
        "row_start": int(rows.min()),
        "row_end": int(rows.max()),
        "col_start": int(cols.min()),
        "col_end": int(cols.max()),
    }


def solve_example(input_grid):
    """Solve a single example by finding the pattern."""
    # Find where 8s are
    region = find_8s_region(input_grid)
    if region is None:
        return None

    # The output appears to be from the mirrored position
    # Mirror rows: if 8s are in rows r1-r2, extract from rows (H-1-r2) to (H-1-r1)
    # Mirror cols: if 8s are in cols c1-c2, extract from cols (W-1-c2) to (W-1-c1)
    H, W = input_grid.shape

    # Extract from mirrored position
    out_row_start = H - 1 - region["row_end"]
    out_row_end = H - 1 - region["row_start"]
    out_col_start = W - 1 - region["col_end"]
    out_col_end = W - 1 - region["col_start"]

    # Extract the region
    output = input_grid[out_row_start : out_row_end + 1, out_col_start : out_col_end + 1]
    return output


def main():
    # <boilerplate code>
    ...


if __name__ == "__main__":
    main()
```

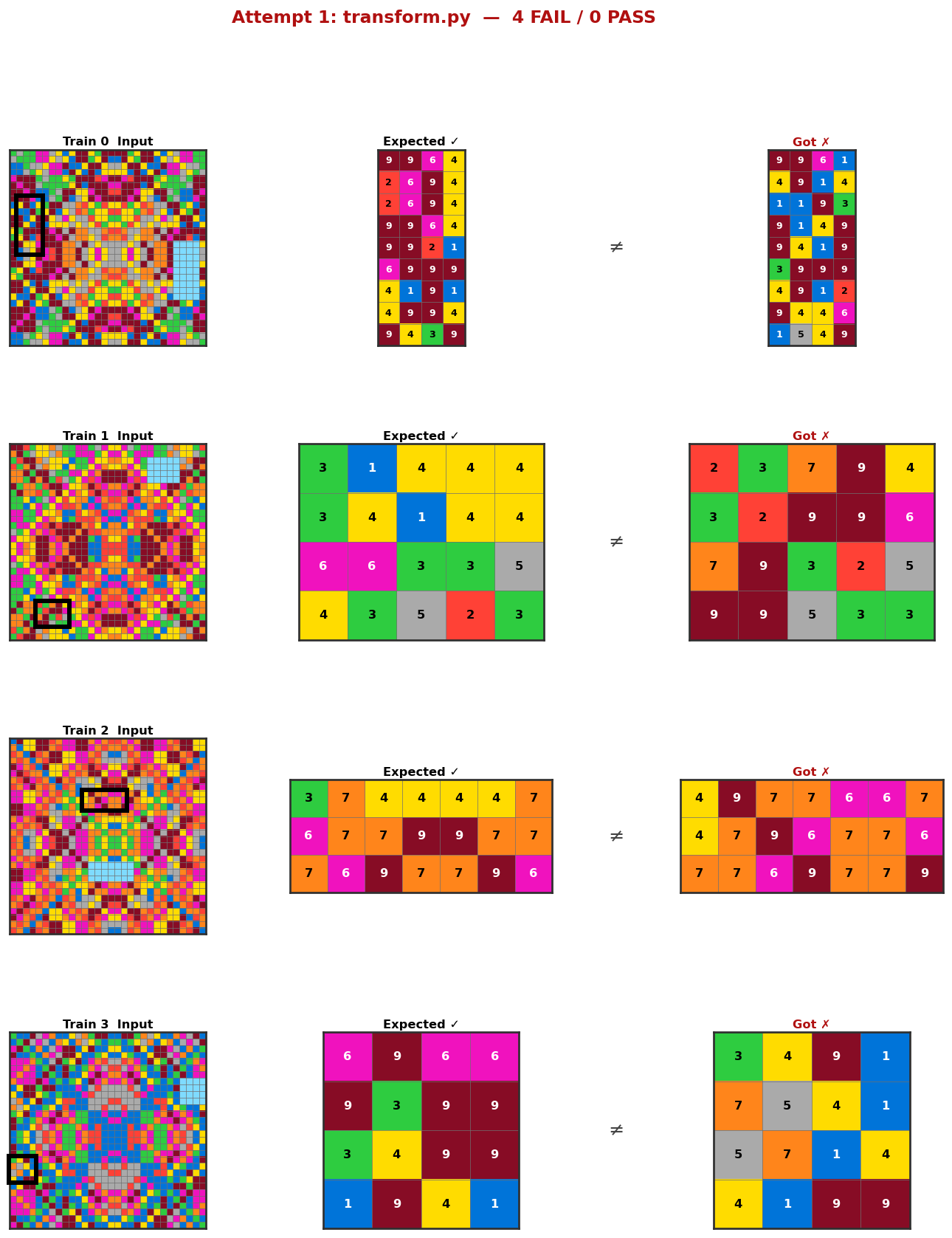

As we can see, the idea revolved around identifying the region containing the 8 cells, and then applying a mirroring of the position. The model correctly realized that there were some beneficial symmetries present, but the solution is not correct. We can visualize the output of running this algorithm on the available training inputs:

Hypothesis Generation

Here is where the approach gets interesting and the power of the reflective hypothesis-generation harness becomes apparent. Looking at the logs, we mostly see tool calls for reading the instruction or task file, writing proposed solutions to a Python file, and running that file with shell commands. Occasionally, however, the logs contain assistant messages that announce new hypotheses. These are followed by dedicated tool calls that write and run code to test each hypothesis, and the results can then refine it or lead to additional ideas to test. Here is a version of the actual agent logs, truncated to be readable at a high level:

```
{"type": "tool_use", "tool_name": "read_file", "parameters": {"file_path": "GEMINI.md"}}
{"type": "tool_result", "output": <output>}
{"type": "tool_use", "tool_name": "read_file", "parameters": {"file_path": "task.json"}}
{"type": "tool_result", "output": <output>}
{"type": "tool_use", "tool_name": "write_file", "parameters": {"file_path": "transform.py", "content": <code>}}
{"type": "tool_result", "output": "File written successfully: transform.py"}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 transform.py", "description": "Test the transform function against training examples"}}
{"type": "tool_result", "output": <output>}
{"type": "message", "role": "assistant", "content": "Let me analyze the pattern more carefully. Looking at the examples, I need to understand what the transformation is.", "delta": false}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code>"}}
{"type": "tool_result", "output": <hypothesis_code output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code2>"}}
{"type": "tool_result", "output": <hypothesis_code2 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code3>"}}
{"type": "tool_result", "output": <hypothesis_code3 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code4>"}}
{"type": "tool_result", "output": <hypothesis_code4 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code5>"}}
{"type": "tool_result", "output": <hypothesis_code5 output>}
{"type": "message", "role": "assistant", "content": "I see the issue - my function is named `solve_example` but should be named `transform`. Let me fix that and continue analyzing the pattern more carefully.", "delta": false}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code6>"}}
{"type": "tool_result", "output": <hypothesis_code6 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code7>"}}
{"type": "tool_result", "output": <hypothesis_code7 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code8>"}}
{"type": "tool_result", "output": <hypothesis_code8 output>}
{"type": "tool_use", "tool_name": "run_shell_command", "parameters": {"command": "python3 -c <hypothesis_code9>"}}
{"type": "tool_result", "output": <hypothesis_code9 output>}
...
{"type": "message", "role": "assistant", "content": "Now I understand the pattern. Let me update the transform function:", "delta": false}
```

So, after reading the required files and running its first solution, the assistant steers the discrete program search by testing individual hypotheses. The code generated for each hypothesis targets a specific feature, and its comments contain detailed descriptions of the logic as well as the assumptions being tested. Eventually, the assistant validates enough hypotheses to attempt writing a solution again.
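The loop the assistant follows can be caricatured in a few lines: propose candidate transformations, run each one against every training pair, and keep only those that reproduce all outputs exactly. This is a sketch of that idea with a hand-written list of toy hypotheses; in the real harness the model writes and runs each hypothesis as code, and the names here are hypothetical.

```python
import numpy as np

# Toy candidate hypotheses; in the harness the model writes these itself.
HYPOTHESES = {
    "identity": lambda g: g,
    "flip_vertical": np.flipud,
    "flip_horizontal": np.fliplr,
    "rotate_180": lambda g: np.rot90(g, 2),
}


def passes_all(transform, train_pairs):
    """True if `transform` reproduces every training output exactly."""
    for pair in train_pairs:
        try:
            predicted = transform(np.array(pair["input"]))
        except Exception:
            return False  # a crashing hypothesis is simply rejected
        if not np.array_equal(predicted, np.array(pair["output"])):
            return False  # wrong values or wrong shape
    return True


def surviving_hypotheses(train_pairs, hypotheses=HYPOTHESES):
    """Names of all hypotheses consistent with every training pair."""
    return [name for name, fn in hypotheses.items() if passes_all(fn, train_pairs)]


train = [{"input": [[1, 2], [3, 4]], "output": [[4, 3], [2, 1]]}]
print(surviving_hypotheses(train))  # ['rotate_180']
```

Passing every training pair is necessary but not sufficient: a hypothesis can fit all the examples and still break on a test input that exercises a case the examples never covered.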

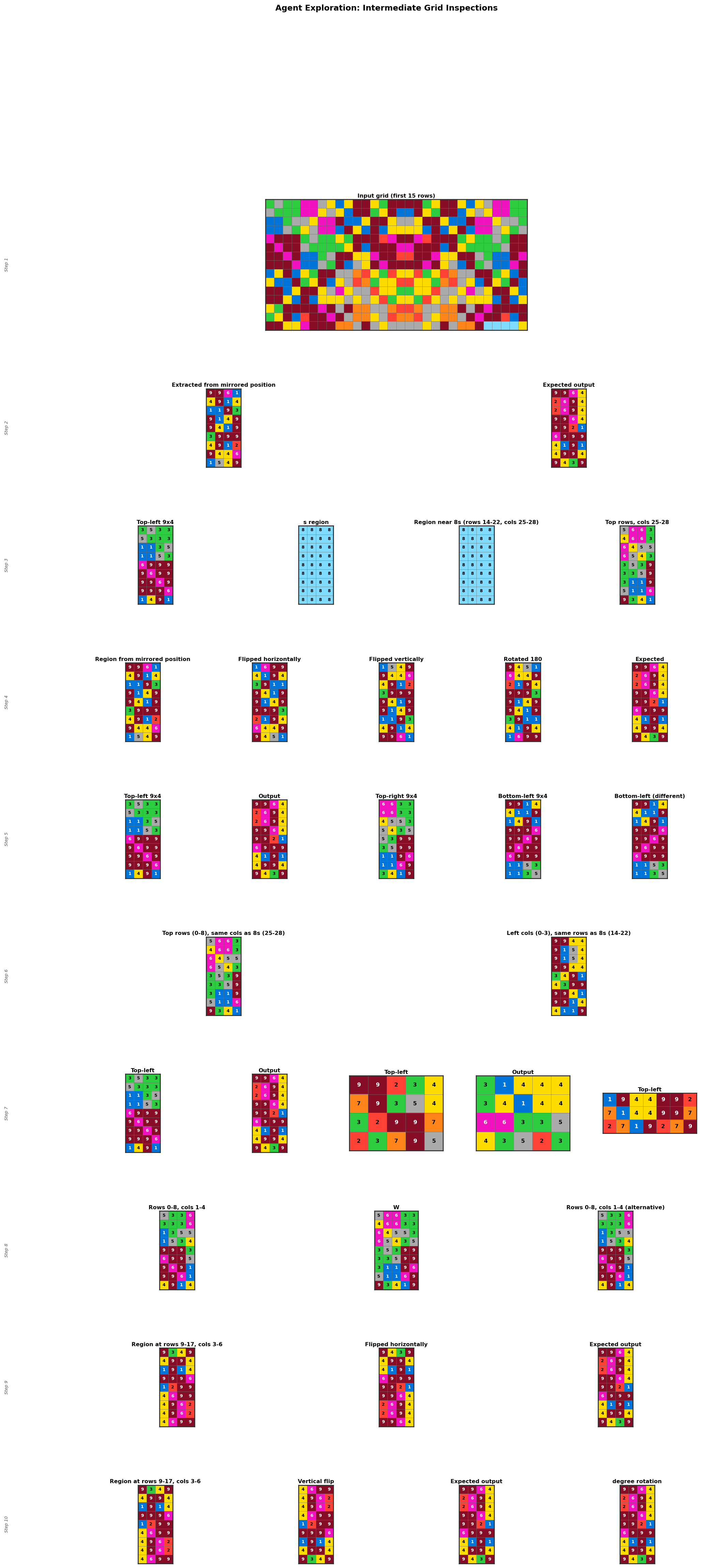

Visualizing all of the individual hypotheses is a bit challenging, but it’s much more digestible than reading through all of the associated code snippets. If you are curious, you can study the figure above to get an idea of the different regions and possible symmetries explored by the model. To summarize, here are the steps in the systematic exploration, as synthesized by Claude Sonnet:

- Located the 8s bounding box in each training example — confirmed they form a solid rectangle of varying size and position.

- Tried extracting from the 180°-mirrored position (reflecting the 8s’ row/col range around the grid centre) — no match.

- Tried all four corners of the matching size — none matched.

- Tried horizontal flip, vertical flip, and 180° rotation of various extracted regions — still no exact match.

- Searched exhaustively over all possible subregion positions: found that a horizontal flip of the output exists somewhere in the grid, at rows 9–17, cols 3–6 for example 0.

- Then tested the 180° rotation of that same region — exact match.

- Derived the formula: the output position is (H − r_max + 1, W − c_max + 1) relative to the 8s bounding box, i.e., the “opposite corner” of the grid. Extracting that region and rotating it 180° gives the correct output.

- Verified the formula on all 4 training examples: all PASS.
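The rule in the last two steps can be written as a short function. This is my own sketch of the summarized formula, not the model's code. It assumes 0-indexed rows and columns, so that cell (r, c) pairs with (H + 1 − r, W + 1 − c); the function name and the synthetic grid are illustrative.

```python
import numpy as np


def opposite_corner_rot180(grid, mask_value=8):
    """Fill the masked rectangle by a 180-degree rotation of its partner block.

    Assumes 0-indexed coordinates where (r, c) pairs with (H + 1 - r, W + 1 - c),
    i.e. the partner block starts at (H - r_max + 1, W - c_max + 1).
    """
    rows, cols = np.where(grid == mask_value)
    r_min, r_max, c_min, c_max = rows.min(), rows.max(), cols.min(), cols.max()
    height, width = grid.shape
    r_start, r_end = height - r_max + 1, height - r_min + 1
    c_start, c_end = width - c_max + 1, width - c_min + 1
    block = grid[r_start : r_end + 1, c_start : c_end + 1]
    return np.rot90(block, 2)  # 180-degree rotation


# Synthetic 30x30 grid with point symmetry (r, c) <-> (31 - r, 31 - c).
idx = np.arange(30)
fold = np.minimum(idx, 31 - idx)
truth = (5 * fold[:, None] + 3 * fold[None, :]) % 7  # values 0-6, never 8
masked = truth.copy()
masked[5:11, 20:24] = 8  # mask fully inside the symmetric block
print(np.array_equal(opposite_corner_rot180(masked), truth[5:11, 20:24]))  # True
```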

Revised Attempt

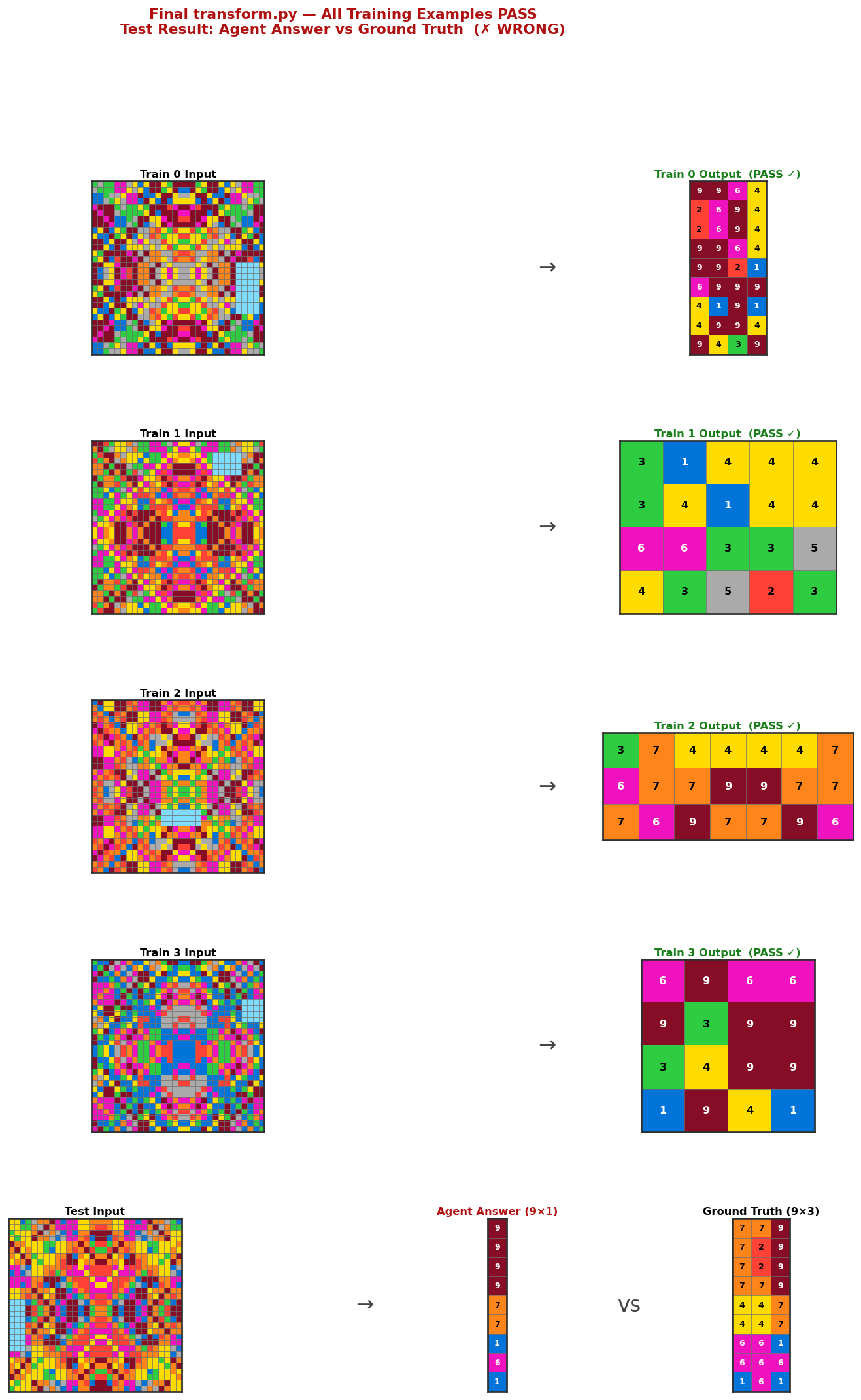

Since the model iterated through its hypotheses until it found one that allowed for passing all of the test cases, the assistant was confident in attempting to submit a solution again. The final solution correctly passed all of the training inputs, as expected, but suddenly failed on the test input. What happened? Looking closely at the logs, the submitted test output had a shape of 9x1, while the ground truth solution had a shape of 9x3. We can clearly see this mismatch in the visual artifact of the solution:

Why did the solution have the wrong shape? The answer goes back to the symmetry shared by all inputs for this task. Remember the interior region in which the correct numbers could be trivially imputed with a basic understanding of the symmetry? It turns out that the rule discovered during hypothesis testing only held for grid cells within this symmetric region. The right-most column of the masked region falls within this symmetric interior, and for that column the submitted solution correctly matches the ground truth. The discovered algorithm had some awareness of this shortcoming, since it did not even attempt to fill in the numbers in the other columns. The end result was that the submitted solution only contained numbers for this single column.
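One way a 9x1 answer can come out of an opposite-corner rule without any error being raised is NumPy's slicing behavior: slices that run past the edge of an array are silently clipped. The sketch below assumes, purely for illustration, a 9x3 mask in the first three columns of a 30x30 grid and 0-indexed pairing of column c with W + 1 − c. It shows one plausible mechanism, not a reconstruction of the model's actual code.

```python
import numpy as np

height, width = 30, 30
grid = np.arange(height * width).reshape(height, width)

# Hypothetical 9x3 masked rectangle touching the left edge of the grid.
r_min, r_max, c_min, c_max = 10, 18, 0, 2

# Partner block under the (r, c) <-> (H + 1 - r, W + 1 - c) pairing.
rows = slice(height - r_max + 1, height - r_min + 2)  # rows 13..21
cols = slice(width - c_max + 1, width - c_min + 2)    # cols 29..31, but 30 and 31 do not exist
block = grid[rows, cols]
print(block.shape)  # (9, 1): NumPy clips the column slice without raising an error

# Only mask columns whose mirrored column lands inside the grid can be filled.
fillable = [c for c in range(c_min, c_max + 1) if 0 <= width + 1 - c < width]
print(fillable)  # [2], the right-most masked column
```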

What’s Next

While the agent that we examined was not ultimately successful, it grasped the bulk of the structure needed to solve this problem correctly. I could have run this experiment longer to see whether one of the agents eventually formed a more robust hypothesis that did not fail as soon as the task extended beyond the symmetric interior. However, there is no guarantee that such a hypothesis would have been found quickly. Remember, after all, that we cherry-picked the most interesting agent trajectory for the above analysis; most of the agents did not come nearly as close to picking up the correct structure of the problem. Since my interest was more in understanding the technique than in trying to solve ARC 2, I was comfortable redirecting my graphics card to other tasks.

So, truthfully, there might not be any direct follow-up to this exploration. However, I do think that the approach taken by Confluence Labs, using hypotheses to better ground program synthesis in data-sparse regimes, has interesting applications far beyond ARC puzzles. With the understanding of the methodology that I gained, perhaps I will recycle some of these ideas in a future application. If you made it this far, hopefully I was able to convey some of that understanding to you.